The full machine learning workflow, illustrated with a concrete house-price prediction example

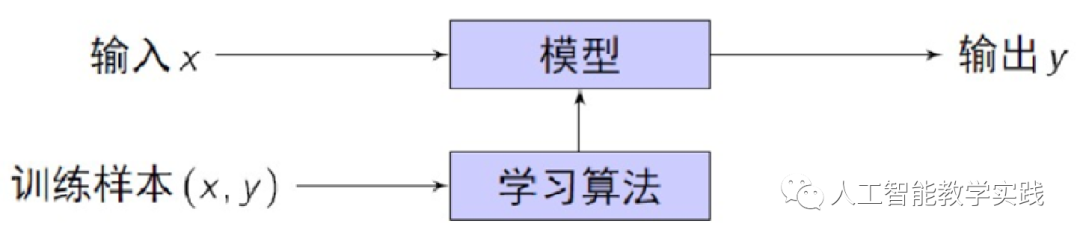

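In machine learning, a computer learns patterns from the data it is given: it looks for regularities in observed data (samples), builds a model from them, and then uses the learned regularities (the model) to make predictions about data that is unknown or cannot be observed.

We use house-price prediction as a running example to walk through the whole process.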

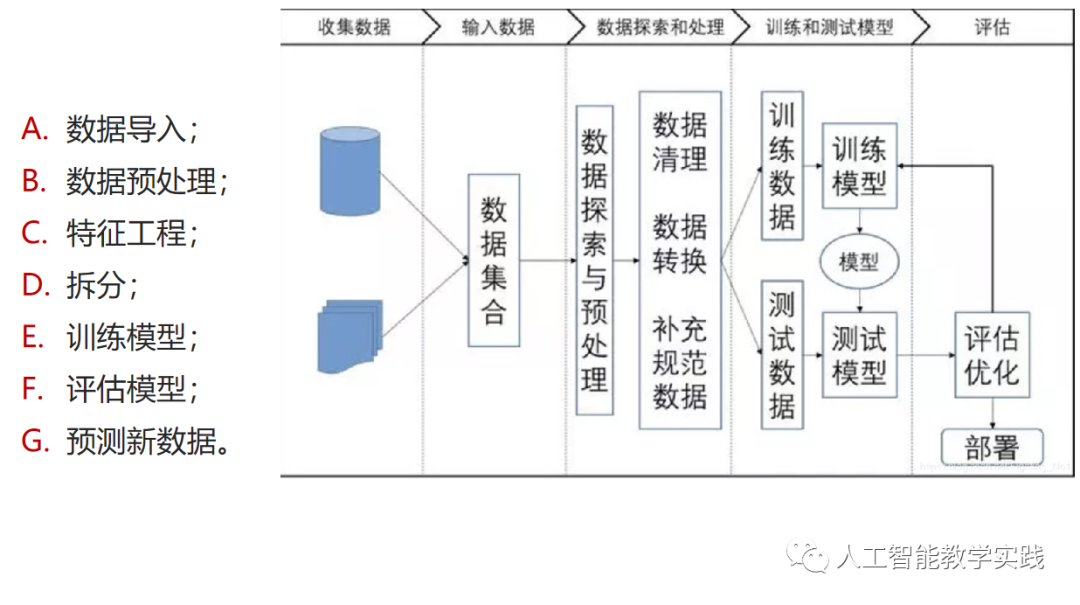

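1-Data collection and preparation:

First, we collect the relevant housing data: the features of each house (such as floor area, number of bedrooms, and location) together with the corresponding sale price.

Next, we clean and preprocess the data. This includes handling missing values and outliers, and performing feature selection and feature engineering.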
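To make the cleaning step concrete, here is a minimal plain-Python sketch. The records, the field names (`area`, `bedrooms`, `price`), and the plausible-range bounds are all made up for illustration; a real project would pick these from the data at hand and would more likely use pandas.

```python
from statistics import median

# Hypothetical raw records: area in square metres, bedrooms, price.
# None marks a missing value; 9999.0 is an implausible area.
raw = [
    {"area": 80.0, "bedrooms": 2, "price": 300.0},
    {"area": None, "bedrooms": 3, "price": 420.0},
    {"area": 120.0, "bedrooms": 3, "price": 450.0},
    {"area": 9999.0, "bedrooms": 4, "price": 500.0},
    {"area": 95.0, "bedrooms": 2, "price": 350.0},
]


def impute_median(rows, key):
    """Replace missing values of `key` with the median of the observed ones."""
    observed = [r[key] for r in rows if r[key] is not None]
    fill = median(observed)
    return [{**r, key: fill if r[key] is None else r[key]} for r in rows]


def drop_implausible(rows, key, low, high):
    """Keep only rows whose `key` lies inside the assumed range [low, high]."""
    return [r for r in rows if low <= r[key] <= high]


# The bounds 10..1000 are an assumption chosen for this toy data set.
cleaned = drop_implausible(impute_median(raw, "area"), "area", low=10.0, high=1000.0)
print(len(cleaned), cleaned[1]["area"])
```

The median is used for imputation because it is less sensitive to extreme values than the mean, so the implausible record does not distort the filled-in value much.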

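2-Data splitting:

Split the data set into a training set and a test set. Usually most of the data is used to train the model and a smaller part is held out to evaluate its performance (the script below, for example, holds out 20%).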
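As a rough illustration of what a shuffled hold-out split does, here is a small sketch in plain Python. The helper name `split_train_test` is hypothetical; in practice `train_test_split` from scikit-learn does this job.

```python
import random


def split_train_test(rows, test_fraction=0.2, seed=7):
    """Shuffle a copy of `rows` and split it into (train, test) lists."""
    shuffled = list(rows)
    # A seeded generator makes the split reproducible.
    random.Random(seed).shuffle(shuffled)
    n_test = max(1, round(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


samples = list(range(10))
train, test = split_train_test(samples)
print(len(train), len(test))
```

Fixing the seed matters when comparing models: every candidate is then trained and evaluated on exactly the same rows.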

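3-Model selection and training:

Based on the characteristics and requirements of the task, choose a suitable regression model, such as linear regression or decision tree regression.

Train the model on the training set. The model learns the patterns and relationships in the training data and uses them to build a prediction function.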
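To show what "building a prediction function" can mean in the simplest case, the sketch below fits a one-feature linear regression by ordinary least squares. The toy areas and prices are invented and lie exactly on a line, so the fit is perfect; real data would not be this clean.

```python
def fit_simple_linear(xs, ys):
    """Least-squares fit of y = slope * x + intercept for a single feature."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


# Hypothetical training data: area (m^2) and price, generated as 3 * area + 50.
areas = [50.0, 80.0, 100.0, 120.0]
prices = [200.0, 290.0, 350.0, 410.0]

slope, intercept = fit_simple_linear(areas, prices)


def predict(area):
    """The learned prediction function."""
    return slope * area + intercept


print(slope, intercept, predict(90.0))
```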

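4-Model evaluation:

Use the test set to evaluate the trained model by comparing its predictions with the true house prices.

Evaluation metrics such as mean squared error (MSE) and the coefficient of determination (R²) are used to judge the model's predictive ability.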
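The two metrics named above are easy to compute by hand. The sketch below uses made-up true and predicted values; note that the `neg_mean_squared_error` scoring string used later in the script is simply the negative of MSE, so values closer to zero are better there.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between truth and prediction."""
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical values, for illustration only.
y_true = [3.0, 5.0, 7.0, 9.0]
y_pred = [2.5, 5.0, 7.5, 9.0]

mse = mean_squared_error(y_true, y_pred)
r2 = r2_score(y_true, y_pred)
print(mse, r2)
```

MSE is expressed in squared units of the target, while R² is unitless, which makes R² easier to compare across data sets.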

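5-Model tuning and improvement:

Based on the evaluation results, tune and improve the model. For example, try different features or adjust the model's hyperparameters to improve predictive performance.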
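Hyperparameter tuning often boils down to trying a handful of candidate values and keeping the one with the lowest validation error. The sketch below does this for the number of neighbours `k` in a tiny one-feature k-nearest-neighbours regressor. The data is invented, and a single validation set is used for brevity where the script below uses cross-validation via `GridSearchCV`.

```python
def knn_predict(train, x, k):
    """Average the targets of the k training points nearest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k


def validation_mse(train, valid, k):
    """Mean squared error of the k-NN predictions on the validation points."""
    errors = [(knn_predict(train, x, k) - y) ** 2 for x, y in valid]
    return sum(errors) / len(errors)


# Hypothetical (area, price) pairs.
train = [(50, 200), (60, 230), (80, 290), (100, 350), (120, 410), (150, 500)]
valid = [(70, 260), (110, 380)]

# Try each candidate value and keep the one with the smallest error.
scores = {k: validation_mse(train, valid, k) for k in (1, 2, 3)}
best_k = min(scores, key=scores.get)
print(scores, best_k)
```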

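6-Prediction and application:

Once the model has been tuned, it can be used to predict house prices: given the features of a previously unseen house, the model outputs a predicted price.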
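Applying a model means feeding it the same features it was trained on. The sketch below assumes a linear model whose intercept and weights are hypothetical stand-ins for a fitted model, and it checks that no expected feature is missing before predicting.

```python
# Hypothetical coefficients, standing in for a model fitted earlier.
MODEL = {"intercept": 50.0, "weights": {"area": 3.0, "bedrooms": 10.0}}


def predict_price(model, house):
    """Predict a price from a dict of features, failing loudly on missing ones."""
    missing = set(model["weights"]) - set(house)
    if missing:
        raise ValueError(f"missing features: {sorted(missing)}")
    return model["intercept"] + sum(
        weight * house[name] for name, weight in model["weights"].items()
    )


new_house = {"area": 90.0, "bedrooms": 3}
price = predict_price(MODEL, new_house)
print(price)
```

Any preprocessing applied at training time (for example scaling) has to be applied to new inputs in exactly the same way, using the statistics learned from the training data.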

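7-Continuous monitoring and updating:

Once the model is deployed in practice, we need to keep monitoring its performance and update or improve it as new data arrives, so that it stays effective over time.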
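One simple way to monitor a deployed model is to track its recent prediction errors and flag it for retraining when they grow too large. The class below is a hypothetical sketch: the name `ErrorMonitor`, the window size, and the threshold are assumptions for illustration, not part of any library.

```python
from collections import deque


class ErrorMonitor:
    """Track the mean absolute error over the most recent predictions."""

    def __init__(self, window=3, threshold=20.0):
        self.errors = deque(maxlen=window)  # old errors drop out automatically
        self.threshold = threshold

    def record(self, predicted, actual):
        self.errors.append(abs(predicted - actual))

    def needs_retraining(self):
        # Wait until the window is full before raising a flag.
        if len(self.errors) < self.errors.maxlen:
            return False
        return sum(self.errors) / len(self.errors) > self.threshold


monitor = ErrorMonitor(window=3, threshold=20.0)
for predicted, actual in [(300, 305), (400, 390), (350, 355)]:
    monitor.record(predicted, actual)
healthy = monitor.needs_retraining()

# Later sales come in much higher than predicted, e.g. after a market shift.
for predicted, actual in [(300, 360), (410, 480)]:
    monitor.record(predicted, actual)
drifted = monitor.needs_retraining()
print(healthy, drifted)
```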

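That is the typical end-to-end machine learning workflow. By going through data collection and preparation, data splitting, model selection and training, model evaluation, model tuning and improvement, prediction and application, and continuous monitoring and updating, we can build a machine learning model that predicts house prices. Specific application scenarios and tasks will differ in detail, but this process applies to most machine learning problems.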

# Import libraries
import numpy as np
from matplotlib import pyplot
from pandas import read_csv
from pandas import set_option
from pandas.plotting import scatter_matrix
from sklearn.preprocessing import StandardScaler
from sklearn.model_selection import train_test_split, KFold, cross_val_score, GridSearchCV
from sklearn.linear_model import LinearRegression, Lasso, ElasticNet
from sklearn.tree import DecisionTreeRegressor
from sklearn.neighbors import KNeighborsRegressor
from sklearn.svm import SVR
from sklearn.pipeline import Pipeline
from sklearn.ensemble import RandomForestRegressor, GradientBoostingRegressor
from sklearn.ensemble import ExtraTreesRegressor, AdaBoostRegressor
from sklearn.metrics import mean_squared_error

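# Load the data (whitespace-separated file without a header row)
filename = 'housing.csv'
names = ['CRIM', 'ZN', 'INDUS', 'CHAS', 'NOX', 'RM', 'AGE', 'DIS',
         'RAD', 'TAX', 'PTRATIO', 'B', 'LSTAT', 'MEDV']
# sep=r'\s+' replaces delim_whitespace=True, which is deprecated in recent pandas
dataset = read_csv(filename, names=names, sep=r'\s+')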

# Dimensions of the data
print(dataset.shape)

# Data type of each attribute
print(dataset.dtypes)

# Show the first 30 records
set_option('display.width', 120)
print(dataset.head(30))

# Descriptive statistics (uncomment to print)
# set_option('display.precision', 1)
# print(dataset.describe())

# Pairwise Pearson correlations (uncomment to print)
# set_option('display.precision', 2)
# print(dataset.corr(method='pearson'))

# Histograms
dataset.hist(sharex=False, sharey=False, xlabelsize=1, ylabelsize=1)
pyplot.show()

# Density plots
dataset.plot(kind='density', subplots=True, layout=(4, 4), sharex=False, fontsize=1)
pyplot.show()

# Box plots
dataset.plot(kind='box', subplots=True, layout=(4, 4), sharex=False, sharey=False, fontsize=8)
pyplot.show()

# Scatter-plot matrix
scatter_matrix(dataset)
pyplot.show()

# Correlation matrix plot
fig = pyplot.figure()
ax = fig.add_subplot(111)
cax = ax.matshow(dataset.corr(), vmin=-1, vmax=1, interpolation='none')
fig.colorbar(cax)
ticks = np.arange(0, 14, 1)  # one tick per column (14 columns)
ax.set_xticks(ticks)
ax.set_yticks(ticks)
ax.set_xticklabels(names)
ax.set_yticklabels(names)
pyplot.show()

# Split the data set: the first 13 columns are features, the last one (MEDV) is the target
array = dataset.values
X = array[:, 0:13]
Y = array[:, 13]
validation_size = 0.2
seed = 7
X_train, X_validation, Y_train, Y_validation = train_test_split(
    X, Y, test_size=validation_size, random_state=seed)

# Evaluate algorithms - evaluation criteria
num_folds = 10
seed = 7
scoring = 'neg_mean_squared_error'

# Evaluate algorithms - baseline
models = {}
models['LR'] = LinearRegression()
models['LASSO'] = Lasso()
models['EN'] = ElasticNet()
models['KNN'] = KNeighborsRegressor()
models['CART'] = DecisionTreeRegressor()
models['SVM'] = SVR()

# Evaluate algorithms with 10-fold cross-validation on the training set
results = []
for key in models:
    kfold = KFold(n_splits=num_folds, random_state=seed, shuffle=True)
    cv_result = cross_val_score(models[key], X_train, Y_train, cv=kfold, scoring=scoring)
    results.append(cv_result)
    print('%s: %f (%f)' % (key, cv_result.mean(), cv_result.std()))

# Evaluate algorithms - box plot
fig = pyplot.figure()
fig.suptitle('Algorithm Comparison')
ax = fig.add_subplot(111)
pyplot.boxplot(results)
ax.set_xticklabels(list(models.keys()))
pyplot.show()

# Evaluate algorithms - standardized data
pipelines = {}
pipelines['ScalerLR'] = Pipeline([('Scaler', StandardScaler()), ('LR', LinearRegression())])
pipelines['ScalerLASSO'] = Pipeline([('Scaler', StandardScaler()), ('LASSO', Lasso())])
pipelines['ScalerEN'] = Pipeline([('Scaler', StandardScaler()), ('EN', ElasticNet())])
pipelines['ScalerKNN'] = Pipeline([('Scaler', StandardScaler()), ('KNN', KNeighborsRegressor())])
pipelines['ScalerCART'] = Pipeline([('Scaler', StandardScaler()), ('CART', DecisionTreeRegressor())])
pipelines['ScalerSVM'] = Pipeline([('Scaler', StandardScaler()), ('SVM', SVR())])
results = []
for key in pipelines:
    kfold = KFold(n_splits=num_folds, random_state=seed, shuffle=True)
    cv_result = cross_val_score(pipelines[key], X_train, Y_train, cv=kfold, scoring=scoring)
    results.append(cv_result)
    print('%s: %f (%f)' % (key, cv_result.mean(), cv_result.std()))

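# Evaluate algorithms - box plot (standardized data)
fig = pyplot.figure()
fig.suptitle('Algorithm Comparison')
ax = fig.add_subplot(111)
pyplot.boxplot(results)
# Label the boxes with the pipeline names, not the baseline model names
ax.set_xticklabels(list(pipelines.keys()))
pyplot.show()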

# Tune the algorithm - KNN
scaler = StandardScaler().fit(X_train)
rescaledX = scaler.transform(X_train)
param_grid = {'n_neighbors': [1, 3, 5, 7, 9, 11, 13, 15, 17, 19, 21]}
model = KNeighborsRegressor()
kfold = KFold(n_splits=num_folds, random_state=seed, shuffle=True)
grid = GridSearchCV(estimator=model, param_grid=param_grid, scoring=scoring, cv=kfold)
grid_result = grid.fit(X=rescaledX, y=Y_train)
print('Best: %s using %s' % (grid_result.best_score_, grid_result.best_params_))
cv_results = zip(grid_result.cv_results_['mean_test_score'],
                 grid_result.cv_results_['std_test_score'],
                 grid_result.cv_results_['params'])
for mean, std, param in cv_results:
    print('%f (%f) with %r' % (mean, std, param))

# Ensemble algorithms
ensembles = {}
ensembles['ScaledAB'] = Pipeline([('Scaler', StandardScaler()), ('AB', AdaBoostRegressor())])
# The base estimator is passed positionally because the keyword was renamed
# from base_estimator to estimator in newer scikit-learn releases
ensembles['ScaledAB-KNN'] = Pipeline([('Scaler', StandardScaler()),
                                      ('ABKNN', AdaBoostRegressor(KNeighborsRegressor(n_neighbors=3)))])
ensembles['ScaledAB-LR'] = Pipeline([('Scaler', StandardScaler()), ('ABLR', AdaBoostRegressor(LinearRegression()))])
ensembles['ScaledRFR'] = Pipeline([('Scaler', StandardScaler()), ('RFR', RandomForestRegressor())])
ensembles['ScaledETR'] = Pipeline([('Scaler', StandardScaler()), ('ETR', ExtraTreesRegressor())])
ensembles['ScaledGBR'] = Pipeline([('Scaler', StandardScaler()), ('GBR', GradientBoostingRegressor())])
results = []
for key in ensembles:
    kfold = KFold(n_splits=num_folds, random_state=seed, shuffle=True)
    cv_result = cross_val_score(ensembles[key], X_train, Y_train, cv=kfold, scoring=scoring)
    results.append(cv_result)
    print('%s: %f (%f)' % (key, cv_result.mean(), cv_result.std()))

# Ensemble algorithms - box plot
fig = pyplot.figure()
fig.suptitle('Algorithm Comparison')
ax = fig.add_subplot(111)
pyplot.boxplot(results)
ax.set_xticklabels(list(ensembles.keys()))
pyplot.show()

# Ensemble algorithm GBM - tuning
scaler = StandardScaler().fit(X_train)
rescaledX = scaler.transform(X_train)
param_grid = {'n_estimators': [10, 50, 100, 200, 300, 400, 500, 600, 700, 800, 900]}
model = GradientBoostingRegressor()
kfold = KFold(n_splits=num_folds, random_state=seed, shuffle=True)
grid = GridSearchCV(estimator=model, param_grid=param_grid, scoring=scoring, cv=kfold)
grid_result = grid.fit(X=rescaledX, y=Y_train)
print('Best: %s using %s' % (grid_result.best_score_, grid_result.best_params_))

# Ensemble algorithm ET - tuning
scaler = StandardScaler().fit(X_train)
rescaledX = scaler.transform(X_train)
param_grid = {'n_estimators': [5, 10, 20, 30, 40, 50, 60, 70, 80]}
model = ExtraTreesRegressor()
# shuffle=True is required whenever random_state is set on KFold
kfold = KFold(n_splits=num_folds, random_state=seed, shuffle=True)
grid = GridSearchCV(estimator=model, param_grid=param_grid, scoring=scoring, cv=kfold)
grid_result = grid.fit(X=rescaledX, y=Y_train)
print('Best: %s using %s' % (grid_result.best_score_, grid_result.best_params_))

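# Train the final model (extra trees with n_estimators=80) on the standardized training set
scaler = StandardScaler().fit(X_train)
rescaledX = scaler.transform(X_train)
etr = ExtraTreesRegressor(n_estimators=80)
etr.fit(X=rescaledX, y=Y_train)

# Evaluate the final model on the held-out validation set,
# reusing the scaler that was fitted on the training data
rescaledX_validation = scaler.transform(X_validation)
predictions = etr.predict(rescaledX_validation)
print(mean_squared_error(Y_validation, predictions))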
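The script relies on `KFold(n_splits=num_folds, ...)` for cross-validation. As a rough sketch of what k-fold splitting does, the plain-Python generator below yields train and test index lists for consecutive, unshuffled folds. The helper name `kfold_indices` is hypothetical, and unlike the script it does not shuffle.

```python
def kfold_indices(n_samples, n_splits):
    """Yield (train_idx, test_idx) pairs for consecutive, unshuffled folds."""
    # Spread the remainder over the first folds so sizes differ by at most one.
    fold_sizes = [n_samples // n_splits] * n_splits
    for i in range(n_samples % n_splits):
        fold_sizes[i] += 1
    start = 0
    for size in fold_sizes:
        test_idx = list(range(start, start + size))
        train_idx = [i for i in range(n_samples) if i < start or i >= start + size]
        yield train_idx, test_idx
        start += size


folds = list(kfold_indices(10, 3))
for train_idx, test_idx in folds:
    print(len(train_idx), test_idx)
```

Every sample lands in exactly one test fold, so each data point is used for validation once and for training in all the other rounds.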