Deploying a Quantized Llama 2 Model on a Windows CPU and Exposing It Through an API

Model deployment

Download the model from Hugging Face

https://huggingface.co/

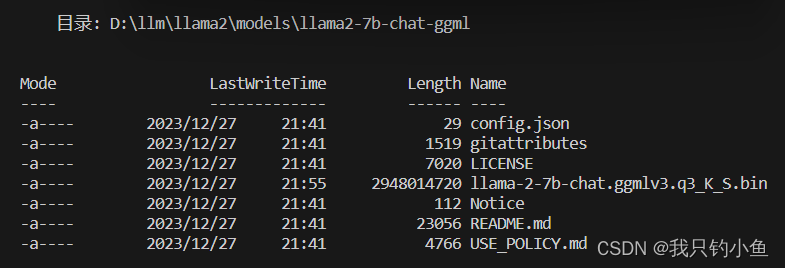

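Place the downloaded model file in a local folder. The examples here use D:\llm\llama2\models\llama2-7b-chat-ggml.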
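If you would rather script the download than use the browser, the sketch below builds the direct file URL and saves the file into the local folder. The repository ID TheBloke/Llama-2-7B-Chat-GGML is an assumption, since this post does not name the repository; the file name and folder match the ones used in the code in this post. The helper names model_url and download_model are illustrative only.

```python
import os
import urllib.request
from urllib.parse import quote

HF_BASE = "https://huggingface.co"


def model_url(repo_id: str, filename: str, revision: str = "main") -> str:
    """Build the direct-download URL for one file in a Hugging Face model repo."""
    return f"{HF_BASE}/{repo_id}/resolve/{quote(revision, safe='')}/{quote(filename)}"


def download_model(repo_id: str, filename: str, target_dir: str) -> str:
    """Download one file into target_dir and return the local path.

    The download is skipped when the file already exists.
    """
    os.makedirs(target_dir, exist_ok=True)
    dest = os.path.join(target_dir, filename)
    if not os.path.exists(dest):
        urllib.request.urlretrieve(model_url(repo_id, filename), dest)
    return dest


# Example call (the file is large; the repo ID is an assumption):
# download_model("TheBloke/Llama-2-7B-Chat-GGML",
#                "llama-2-7b-chat.ggmlv3.q3_K_S.bin",
#                r"D:\llm\llama2\models\llama2-7b-chat-ggml")
```

This uses only the standard library, so it has no resume support; for a file of this size an interrupted download has to start over.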

Running Llama 2 locally

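from ctransformers import AutoModelForCausalLM

# A raw string keeps the backslashes in the Windows path literal.
llm = AutoModelForCausalLM.from_pretrained(
    r"D:\llm\llama2\models\llama2-7b-chat-ggml",
    model_file="llama-2-7b-chat.ggmlv3.q3_K_S.bin",
    model_type="llama",  # stated explicitly in case the folder has no config.json
)

print(llm("<s>Human: Give a brief introduction to China\n</s><s>Assistant: "))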
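The prompt passed to the model wraps the user's text in a Human/Assistant template delimited by <s> and </s>. A small helper keeps that template in one place. This is a minimal sketch: build_prompt and ask are hypothetical names, and llm is assumed to be any callable that maps a prompt string to generated text, such as the object returned by from_pretrained.

```python
def build_prompt(user_text: str) -> str:
    """Wrap one user message in the Human/Assistant prompt template."""
    return "<s>Human: " + user_text.strip() + "\n</s><s>Assistant: "


def ask(llm, user_text: str, **generation_kwargs) -> str:
    """Send one user message to the model and return the trimmed reply.

    Extra keyword arguments are passed through to the model call unchanged.
    """
    return llm(build_prompt(user_text), **generation_kwargs).strip()
```

With a ctransformers model, a call might look like ask(llm, "Give a brief introduction to China", max_new_tokens=256), where 256 is an arbitrary limit chosen for illustration.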

Building the API with FastAPI

Server

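import uvicorn
from fastapi import FastAPI
from pydantic import BaseModel
from ctransformers import AutoModelForCausalLM

# Reference: https://blog.csdn.net/qq_36187610/article/details/131835752

app = FastAPI()

# Load the model once at startup instead of on every request.
llm = AutoModelForCausalLM.from_pretrained(
    r"D:\llm\llama2\models\llama2-7b-chat-ggml",
    model_file="llama-2-7b-chat.ggmlv3.q3_K_S.bin",
    model_type="llama",  # stated explicitly in case the folder has no config.json
)


class Query(BaseModel):
    text: str


@app.post("/chat/")
async def chat(query: Query):
    # Wrap the user's text in the Human/Assistant prompt template.
    prompt = "<s>Human: " + query.text + "\n</s><s>Assistant: "
    # Generation blocks the event loop, so requests are served one at a time.
    output = llm(prompt)
    print(output)
    return {"result": output}


if __name__ == "__main__":
    # 0.0.0.0 listens on all network interfaces so other machines can connect.
    uvicorn.run(app, host="0.0.0.0", port=6667)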

Client

import requests

# Do not use 0.0.0.0 here; use the server's actual IP address,
# which you can find by running ipconfig on the server.
url = "http://192.168.3.16:6667/chat/"

query = {"text": "Hello, please introduce yourself. Answer in Chinese, using no more than 100 characters."}

response = requests.post(url, json=query)

if response.status_code == 200:
    result = response.json()
    print("BOT:", result["result"])
else:
    print("Error:", response.status_code, response.text)
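As the comment in the client code notes, 0.0.0.0 is only a bind address on the server, and the client needs the machine's real address. Besides ipconfig, the address can be looked up from Python. The sketch below uses a common UDP-socket trick; local_ip is a hypothetical helper, and 8.8.8.8 is an arbitrary routable probe address to which no packet is actually sent.

```python
import socket


def local_ip(probe_host: str = "8.8.8.8", probe_port: int = 80) -> str:
    """Return the IPv4 address this machine would use for outbound traffic.

    Falls back to 127.0.0.1 when no route is available.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM)
    try:
        # connect() on a UDP socket only selects a route; nothing is sent.
        sock.connect((probe_host, probe_port))
        return sock.getsockname()[0]
    except OSError:
        return "127.0.0.1"
    finally:
        sock.close()


# Print the URL a client on the same network would use for this server.
print(f"http://{local_ip()}:6667/chat/")
```

Run this on the server machine and use the printed URL in the client. On a machine with several network adapters it reports only the address on the default route, so ipconfig remains the way to see all of them.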

Frequently used Git repositories

https://github.com/marella/ctransformers

https://github.com/FlagAlpha/Llama2-Chinese

https://github.com/tiangolo/fastapi